Computing has evolved from rigid, rule-based systems to intelligent models capable of handling uncertainty. But how do machines find the best solution when there are millions of possibilities? This is where the genetic algorithm in soft computing plays a key role.

Understanding Soft Computing: The Foundation of Intelligent Optimization

Traditional computing systems are designed to produce exact results using fixed rules and precise inputs. However, real-world problems are rarely simple or fixed. They often involve uncertainty, incomplete information, and constantly changing conditions. Soft computing was developed to address these limitations by enabling systems to produce approximate but practical solutions.

Soft computing is not a single technique, but a combination of intelligent approaches, including neural networks, fuzzy logic, swarm intelligence, and genetic algorithms.

What are Genetic Algorithms in Soft Computing?

Genetic algorithms in soft computing are problem-solving techniques inspired by Darwin's theory of natural selection. Just as species evolve over generations through selection and adaptation, genetic algorithms improve candidate solutions step by step until they reach a good, often near-optimal, outcome. Instead of relying strictly on predefined logic, these algorithms generate multiple possible solutions and evaluate how well each one performs.

The strongest solutions are then selected, combined, and slightly modified to create better versions in the next cycle. Over time, this evolutionary process helps the system move closer to the best possible answer.

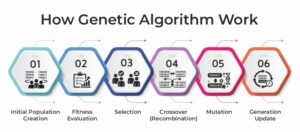

How Does a Genetic Algorithm Work: Step-by-Step Process

The strength of genetic algorithms in soft computing lies in their evolutionary process. They don’t guess a solution randomly; they improve solutions systematically over multiple generations.

1. Initial Population Creation

The process begins by generating a group of possible solutions, known as a population. Each solution, often called a chromosome, represents a potential answer to the problem. These solutions are usually created randomly to ensure diversity.
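As a minimal sketch of this step, assuming each chromosome is a fixed-length list of bits (one common encoding, not the only one), a random population could be built like this. The function name `create_population` and its parameters are illustrative.

```python
import random

def create_population(pop_size, chromosome_length, rng):
    """Return pop_size random chromosomes, each a list of 0/1 genes."""
    return [[rng.randint(0, 1) for _ in range(chromosome_length)]
            for _ in range(pop_size)]

rng = random.Random(42)  # fixed seed so the run is repeatable
population = create_population(pop_size=6, chromosome_length=8, rng=rng)
for chromosome in population:
    print(chromosome)
```

Random initialization spreads the starting candidates across the search space, which is what gives later generations something to recombine.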

2. Fitness Evaluation

Each chromosome is evaluated using a fitness function. This function measures how good or effective the solution is. When the solution performs better, it gets a higher fitness score.
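To make this concrete, here is a small sketch that uses a toy fitness function: counting the 1 genes in a bit-string chromosome (often called the OneMax problem). This fitness function is an assumption chosen for illustration; a real problem would substitute its own scoring logic.

```python
def fitness(chromosome):
    """Toy fitness: the number of 1 genes (higher is better)."""
    return sum(chromosome)

population = [[1, 0, 1, 1], [0, 0, 0, 1], [1, 1, 1, 1]]
scores = [fitness(c) for c in population]
print(scores)  # [3, 1, 4]
```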

3. Selection

Higher-scoring solutions are more likely to be selected for the next step. This step mimics natural selection: stronger solutions have a higher chance of passing their characteristics to the next generation.
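One common way to implement this is fitness-proportionate (roulette-wheel) selection, sketched below. The article does not name a specific selection scheme, so this choice, the function name `select_parents`, and the sample data are assumptions.

```python
import random

def select_parents(population, scores, num_parents, rng):
    """Pick parents with probability proportional to their fitness score.

    Assumes scores are non-negative and at least one is positive.
    """
    return rng.choices(population, weights=scores, k=num_parents)

rng = random.Random(0)
population = [[0, 0, 0], [1, 1, 0], [1, 1, 1]]
scores = [sum(c) for c in population]  # toy fitness: count of 1 genes
parents = select_parents(population, scores, num_parents=4, rng=rng)
print(parents)
```

A chromosome with a score of zero is never picked here, while the others are drawn in proportion to their scores. Tournament selection is a frequent alternative when scores can be negative.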

4. Crossover (Recombination)

Selected solutions are then combined to create new offspring. During crossover, parts of two parent solutions are merged to produce new variations, some of which may outperform both parents. This helps the algorithm explore promising regions of the search space.
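A simple illustration is single-point crossover, sketched here under the assumption that both parents are equal-length lists with at least two genes. The function name is illustrative.

```python
import random

def single_point_crossover(parent_a, parent_b, rng):
    """Swap the tails of two parents at a random cut point."""
    point = rng.randint(1, len(parent_a) - 1)  # cut strictly inside the list
    child_a = parent_a[:point] + parent_b[point:]
    child_b = parent_b[:point] + parent_a[point:]
    return child_a, child_b

rng = random.Random(1)
parent_a = [0, 0, 0, 0, 0, 0]
parent_b = [1, 1, 1, 1, 1, 1]
child_a, child_b = single_point_crossover(parent_a, parent_b, rng)
print(child_a)
print(child_b)
```

Each child starts with genes from one parent and ends with genes from the other, so traits from both can appear in a single offspring.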

5. Mutation

To maintain diversity and avoid stagnation, small random changes are introduced into some solutions. Mutation prevents the algorithm from getting stuck in limited solution areas and encourages exploration.
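For bit-string chromosomes, mutation is often implemented as a bit flip applied to each gene with a small probability. The sketch below assumes that encoding; the `mutation_rate` value is an arbitrary example, not a recommendation.

```python
import random

def mutate(chromosome, mutation_rate, rng):
    """Flip each gene independently with probability mutation_rate."""
    return [1 - gene if rng.random() < mutation_rate else gene
            for gene in chromosome]

rng = random.Random(7)
original = [1, 0, 1, 1, 0, 0, 1, 0]
mutated = mutate(original, mutation_rate=0.1, rng=rng)
print(original)
print(mutated)
```

The function returns a new list and leaves the original untouched, which keeps parents intact if they are reused later in the same generation.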

6. Generation Update

The newly created solutions replace the older population, and the process repeats. With each generation, the overall quality of solutions tends to improve, although an individual generation can occasionally be worse than the one before it.

This cycle continues until the algorithm finds a satisfactory or near-optimal solution. Through this evolutionary approach, genetic algorithms in soft computing efficiently solve complex optimization problems that are difficult to address using traditional methods.
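The six steps can be tied together in a short loop. The sketch below is one possible arrangement, assuming bit-string chromosomes, the toy "count the 1s" fitness function, roulette-wheel selection, single-point crossover, and bit-flip mutation. All names and parameter values are illustrative.

```python
import random

def fitness(chromosome):
    """Toy fitness: the number of 1 genes."""
    return sum(chromosome)

def run_ga(pop_size=20, length=16, generations=40, mutation_rate=0.02, seed=0):
    rng = random.Random(seed)
    # Step 1: random initial population.
    population = [[rng.randint(0, 1) for _ in range(length)]
                  for _ in range(pop_size)]
    best = max(population, key=fitness)
    initial_best = fitness(best)
    for _ in range(generations):
        # Step 2: evaluate; +1 keeps every selection weight positive.
        weights = [fitness(c) + 1 for c in population]
        next_population = []
        while len(next_population) < pop_size:
            # Step 3: fitness-proportionate selection of two parents.
            parent_a, parent_b = rng.choices(population, weights=weights, k=2)
            # Step 4: single-point crossover.
            point = rng.randint(1, length - 1)
            child = parent_a[:point] + parent_b[point:]
            # Step 5: bit-flip mutation.
            child = [1 - g if rng.random() < mutation_rate else g
                     for g in child]
            next_population.append(child)
        # Step 6: the new generation replaces the old one.
        population = next_population
        generation_best = max(population, key=fitness)
        if fitness(generation_best) > fitness(best):
            best = generation_best  # remember the best solution seen so far
    return best, initial_best

best, initial_best = run_ga()
print("initial best:", initial_best, "final best:", fitness(best))
```

Because the whole population is replaced each generation, the sketch tracks the best chromosome seen so far separately. Many implementations instead copy the top few solutions directly into the next generation (elitism).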

Solving What Traditional Methods Cannot

Genetic algorithms in soft computing are valuable for optimization problems where traditional methods struggle. In many real-world scenarios, the goal is not just to find a solution, but to find the best possible solution among thousands or even millions of alternatives. This is where genetic algorithms prove to be highly effective.

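One of their biggest strengths is global search capacity. Because a genetic algorithm maintains a whole population of candidates spread across the search space, rather than refining a single point, it reduces the risk of getting trapped in a local optimum and increases the chances of discovering a better overall solution. Genetic algorithms also do not require an explicit mathematical model of the problem, only a fitness function that can score candidates, so they can optimize systems even when relationships between variables are unclear or constantly changing.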

Another important strength is adaptability. As generations evolve, the algorithm continuously refines solutions based on performance. This makes it suitable for dynamic environments such as supply chain management, robotics, financial modeling, and machine learning optimization.

For example, in route optimization for delivery services, a genetic algorithm can evaluate thousands of route combinations and evolve the most cost-efficient and time-saving path over multiple generations.
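A rough sketch of the route example follows. It assumes a route is a permutation of stop indices, scores routes by total round-trip distance, and uses tournament selection, order crossover, and swap mutation so that every child remains a valid route. The coordinates in `STOPS` are made up for illustration, and all function names are hypothetical.

```python
import math
import random

# Hypothetical delivery stops as (x, y) coordinates; not real data.
STOPS = [(0, 0), (2, 6), (5, 1), (6, 5), (8, 2)]

def route_length(route):
    """Total distance of a round trip visiting STOPS in the given order."""
    return sum(math.dist(STOPS[route[i]], STOPS[route[(i + 1) % len(route)]])
               for i in range(len(route)))

def order_crossover(parent_a, parent_b, rng):
    """Keep a slice of parent_a in place; fill the rest in parent_b's order."""
    start, end = sorted(rng.sample(range(len(parent_a)), 2))
    kept = parent_a[start:end + 1]
    rest = [stop for stop in parent_b if stop not in kept]
    return rest[:start] + kept + rest[start:]

def swap_mutation(route, rng):
    """Return a copy of the route with two stops swapped."""
    i, j = rng.sample(range(len(route)), 2)
    mutated = route[:]
    mutated[i], mutated[j] = mutated[j], mutated[i]
    return mutated

def tournament(population, rng):
    """Return the shorter of two randomly chosen routes."""
    return min(rng.sample(population, 2), key=route_length)

def evolve_route(pop_size=30, generations=60, mutation_rate=0.2, seed=1):
    rng = random.Random(seed)
    n = len(STOPS)
    population = [rng.sample(range(n), n) for _ in range(pop_size)]
    best = min(population, key=route_length)
    for _ in range(generations):
        children = []
        for _ in range(pop_size):
            child = order_crossover(tournament(population, rng),
                                    tournament(population, rng), rng)
            if rng.random() < mutation_rate:
                child = swap_mutation(child, rng)
            children.append(child)
        population = children
        best = min(population + [best], key=route_length)  # keep best so far
    return best

best_route = evolve_route()
print(best_route, round(route_length(best_route), 2))
```

Plain single-point crossover would not work here, because splicing two routes can duplicate some stops and drop others. Order crossover is one of several operators designed for permutation encodings.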

Advantages and Limitations of Genetic Algorithms in Soft Computing

| Advantages | Limitations |
| --- | --- |
| Efficiently explores large and complex search spaces | Computationally intensive for large problems |
| Capable of approaching global optimal solutions | Does not guarantee the absolute best solution |
| Handles non-linear and multi-variable problems effectively | May converge prematurely to suboptimal solutions |
| Works well for multi-objective optimization | Slower than traditional methods for simple problems |
| Evaluates multiple solutions simultaneously (parallel search) | Requires multiple iterations to reach good results |

The Next Era of Optimization Technologies!

As industries move toward automation, artificial intelligence, and data-driven decision-making, the need for systems that can adapt, learn, and optimize in uncertain environments will only grow stronger. In the coming years, as problems become more complex and datasets more dynamic, organizations will increasingly rely on such adaptive methods to stay competitive and innovative.

The future of optimization will not be defined by rigid formulas, but by intelligent systems capable of evolving with change, and genetic algorithms will play a transformative role in shaping smarter, more resilient technologies.


FAQs:

Q1. What is the Difference Between GA and Traditional Algorithms?

Answer: Genetic algorithms are stochastic: they use a population-based search and do not require gradient information. Many traditional optimization methods, such as gradient descent, are deterministic and rely on gradient information or other problem-specific structure.

Q2. What are chromosomes and genes?

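Answer: A chromosome is a candidate solution, and genes are the individual components of that solution. For example, in a delivery route encoded as a list of stops, the whole list is the chromosome and each stop is a gene.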
