In sci-fi movies, computers instantly recognize every object in view. That technology has been real for years now, and it is changing everything from self-driving cars to security systems. At the heart of this visual revolution sits YOLO (You Only Look Once), perhaps the most influential object detection framework of our time.

YOLO can turn a simple webcam into an intelligent observer, capable of telling a wandering deer from a neighborhood dog in milliseconds. This guide unpacks it from every angle: the theory, the technical foundations, and the real-world applications.

What is YOLO Object Detection?

YOLO flipped the script on traditional object detection when it burst onto the scene in 2015. Earlier techniques laboriously scanned each image over and over, for example with sliding windows, which was painfully slow. YOLO takes a simpler, unified approach instead.

It divides each image into a grid and predicts bounding boxes and class probabilities simultaneously, in a single forward pass through one neural network. This single-shot design is what makes real-time detection possible: the original paper reports 45 frames per second for the base model and 155 frames per second for the smaller Fast YOLO variant.
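To make "a single forward pass" concrete, here is a minimal sketch of how large the network's output is in the original YOLO (v1) formulation. It assumes an S×S grid, B boxes per cell (each with x, y, width, height, and confidence), and C class probabilities per cell; the settings S=7, B=2, C=20 are the PASCAL VOC values from the original paper, while the function name is hypothetical.

```python
def yolo_v1_output_shape(S, B, C):
    """Shape of the prediction tensor in the original YOLO formulation.

    Each of the S x S cells predicts B boxes (x, y, w, h, confidence)
    plus one shared set of C conditional class probabilities.
    """
    return (S, S, B * 5 + C)


# PASCAL VOC settings from the original paper: 7x7 grid, 2 boxes per cell, 20 classes.
shape = yolo_v1_output_shape(S=7, B=2, C=20)
total_values = shape[0] * shape[1] * shape[2]

print(shape)         # (7, 7, 30)
print(total_values)  # 1470
```

Every box and every class score for the whole image comes out of that one 7×7×30 tensor, which is why no second pass over the image is needed.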

The secret sauce lies in its convolutional neural network backbone (typically a Darknet variant) and, starting with YOLOv2, its anchor-based box prediction. It spots pedestrians for autonomous vehicles, counts products on retail shelves, and keeps up with the unpredictable real world at speeds that slower, more specialized detectors struggle to match.
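Anchor boxes were not part of the original YOLO; they arrived with YOLOv2, and some recent versions have moved to anchor-free heads. As a sketch of the idea, the snippet below follows the box parameterization described for YOLOv2/v3: the network predicts raw offsets (tx, ty, tw, th), a sigmoid keeps the center inside its grid cell, and the width and height scale a predefined anchor prior. Expressing everything in grid-cell units and the names `decode_box` and `sigmoid` are assumptions for illustration.

```python
import math


def sigmoid(v):
    """Logistic function, squashing any real number into (0, 1)."""
    return 1.0 / (1.0 + math.exp(-v))


def decode_box(tx, ty, tw, th, cx, cy, pw, ph):
    """Decode raw outputs into a box center and size, in grid-cell units.

    (cx, cy): column and row offset of the grid cell.
    (pw, ph): width and height of the anchor prior, in grid-cell units.
    """
    bx = sigmoid(tx) + cx   # sigmoid keeps the center inside this cell
    by = sigmoid(ty) + cy
    bw = pw * math.exp(tw)  # anchor width scaled by a learned factor
    bh = ph * math.exp(th)  # anchor height scaled by a learned factor
    return bx, by, bw, bh


# All-zero raw outputs: the center lands mid-cell and the size equals the anchor.
print(decode_box(0.0, 0.0, 0.0, 0.0, cx=6, cy=4, pw=3.0, ph=2.0))  # (6.5, 4.5, 3.0, 2.0)
```

Predicting small corrections to a sensible prior, rather than raw box sizes, is what made training more stable in the anchor-based versions.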

Understanding YOLO's Architecture

YOLO's architecture resembles a sandwich of computational layers that turn raw pixels into actionable predictions. The backbone, usually a Darknet variant, acts as the feature extractor: it passes incoming images through a sequence of convolutional layers that progressively distill visual information into increasingly abstract representations.
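One way to see how the backbone trades spatial detail for abstraction is to track the feature-map size. The sketch below applies the standard convolution output-size formula, floor((n + 2p − k) / s) + 1, five times, assuming a 416×416 input and 3×3, stride-2, padding-1 downsampling layers in the style of Darknet-53. These layer settings are an illustrative assumption, not the exact configuration of every YOLO version.

```python
def conv_output_size(size, kernel=3, stride=2, padding=1):
    """Standard convolution output-size formula: floor((n + 2p - k) / s) + 1."""
    return (size + 2 * padding - kernel) // stride + 1


size = 416
sizes = [size]
for _ in range(5):  # assumed: five stride-2 downsampling convolutions
    size = conv_output_size(size)
    sizes.append(size)

print(sizes)  # [416, 208, 104, 52, 26, 13]
```

Each stride-2 layer halves the resolution, so after five of them every position in the final 13×13 map summarizes a 32×32-pixel patch of the input.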

These features then feed into the neck (often built from components such as FPN or PANet), which combines them into a feature pyramid that helps the network handle objects of wildly different sizes. Finally, the head turns these features into box coordinates, confidence scores, and class probabilities. Remember those frustrating early object detectors that completely missed small objects? This multi-scale approach went a long way toward fixing that headache.
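The payoff of the feature pyramid is that predictions are made at several resolutions at once. The sketch below assumes YOLOv3-style settings (a 416×416 input, prediction strides of 8, 16, and 32, and three anchors per scale) and counts the grids and candidate boxes that result; the function name is hypothetical.

```python
def multiscale_predictions(input_size, strides=(8, 16, 32), anchors_per_scale=3):
    """Return the grid size at each scale and the total number of candidate boxes."""
    grids = [input_size // s for s in strides]
    total = sum(g * g * anchors_per_scale for g in grids)
    return grids, total


grids, total = multiscale_predictions(416)
print(grids)  # [52, 26, 13]
print(total)  # 10647
```

The fine 52×52 grid gives small objects their own cells, while the coarse 13×13 grid covers large ones; the many overlapping candidates this produces are why a cleanup step is needed at the end.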

How Does YOLO Object Detection Work?

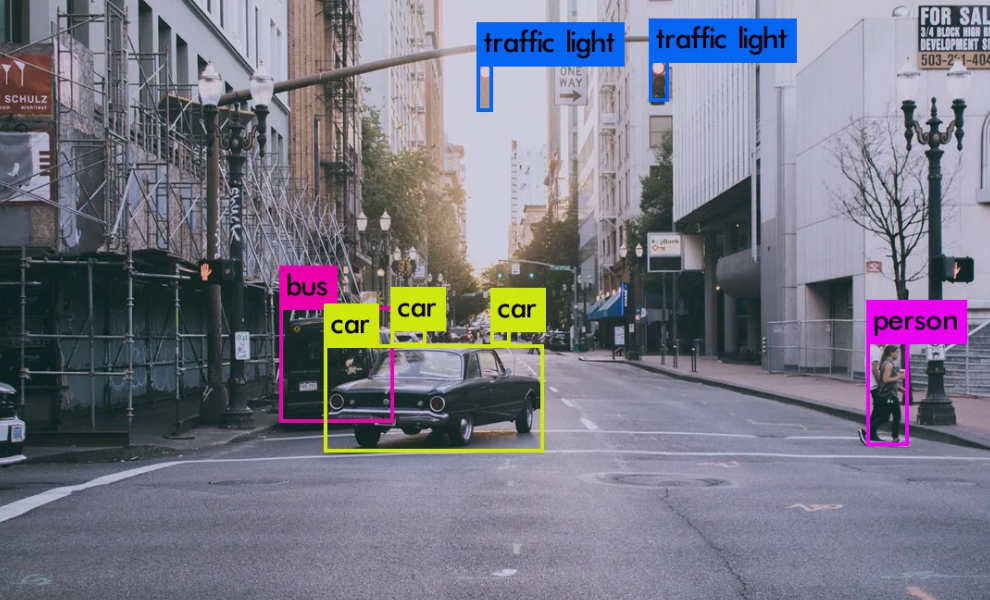

Its grid-based detection method is where its operational genius starts. The algorithm splits the input image into an S×S grid (7×7 in the original YOLO; YOLOv3 predicts on 13×13, 26×26, and 52×52 grids for a 416×416 input) and makes each cell responsible for detecting the objects whose centers fall inside it.
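Finding the responsible cell is simple arithmetic: scale the object's center to grid coordinates and take the integer part. This minimal sketch assumes pixel coordinates and a single S×S grid; the function name and the clamping of centers that lie exactly on the right or bottom edge are illustrative choices.

```python
def responsible_cell(x_center, y_center, img_w, img_h, S):
    """Return (row, col) of the grid cell that contains an object's center."""
    col = min(int(x_center / img_w * S), S - 1)  # clamp centers on the right edge
    row = min(int(y_center / img_h * S), S - 1)  # clamp centers on the bottom edge
    return row, col


# A box centered at (300, 100) in a 416x416 image with a 13x13 grid
print(responsible_cell(300, 100, 416, 416, S=13))  # (3, 9)
```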

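Rather than exhaustively searching every possible location, each grid cell predicts B bounding boxes, each with five components: the x and y coordinates of the box center, its width and height, and a confidence score. The confidence reflects both how likely the box is to contain an object and how well the box fits it; the original paper defines it as Pr(Object) × IoU, the intersection over union between the predicted box and the ground truth.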
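Intersection over union (IoU) is the overlap measure behind that confidence score. Here is a minimal sketch, assuming boxes given as corner coordinates (x1, y1, x2, y2); `confidence_target` is a hypothetical helper that multiplies Pr(Object) by IoU, following the definition in the original paper.

```python
def iou(box_a, box_b):
    """Intersection over union of two boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(box_a[0], box_b[0]), max(box_a[1], box_b[1])
    ix2, iy2 = min(box_a[2], box_b[2]), min(box_a[3], box_b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)  # zero if boxes don't overlap
    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def confidence_target(pr_object, pred_box, true_box):
    """Confidence as defined in the original paper: Pr(Object) * IoU(pred, truth)."""
    return pr_object * iou(pred_box, true_box)


pred, truth = (0, 0, 10, 10), (5, 0, 15, 10)
print(iou(pred, truth))                     # 50 / 150, about 0.333
print(confidence_target(1.0, pred, truth))  # same value: an object is present
print(confidence_target(0.0, pred, truth))  # 0.0: no object in this cell
```

During training, a cell with no object is pushed toward a confidence of zero, while the responsible predictor is pushed toward the IoU of its box with the ground truth.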

At the same time, each grid cell predicts conditional class probabilities, Pr(Class|Object), which answer the crucial question: assuming something is here, what exactly is it? The entire prediction happens in one forward pass, a computational sprint rather than the marathon required by two-stage detectors.
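At test time, the original paper combines the two predictions: multiplying each conditional class probability by a box's confidence gives a class-specific score for that box. The sketch below uses made-up numbers for a single cell with three hypothetical classes (dog, deer, cat); the helper name is likewise hypothetical.

```python
def class_scores(class_probs, box_confidence):
    """Class-specific confidence: Pr(Class_i | Object) * box confidence."""
    return [p * box_confidence for p in class_probs]


labels = ["dog", "deer", "cat"]  # hypothetical classes
class_probs = [0.7, 0.2, 0.1]    # Pr(Class | Object) predicted by one cell
box_confidence = 0.8             # Pr(Object) * IoU for one of that cell's boxes

scores = class_scores(class_probs, box_confidence)
best = max(range(len(scores)), key=scores.__getitem__)
print(labels[best])  # dog
```

Because the class probabilities are shared by all B boxes in a cell, each box's score differs only through its own confidence.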

YOLO trains with a multi-part loss function that simultaneously penalizes errors in box coordinates, box dimensions, confidence, and classification. Through backpropagation over thousands of images, the network learns to balance these competing objectives. Non-Maximum Suppression (NMS) handles the final cleanup: when several boxes overlap heavily (their IoU exceeds a threshold), it keeps the one with the highest confidence and suppresses the rest.
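Greedy NMS fits in a few lines. This sketch assumes corner-format boxes, a single class (in practice NMS is usually run per class), and an IoU threshold of 0.5, which is a common choice rather than a fixed rule; the function names are illustrative.

```python
def iou(a, b):
    """Intersection over union of two (x1, y1, x2, y2) boxes."""
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def non_max_suppression(boxes, scores, iou_threshold=0.5):
    """Keep the highest-scoring box, drop boxes that overlap it too much, repeat."""
    order = sorted(range(len(boxes)), key=lambda i: scores[i], reverse=True)
    keep = []
    while order:
        best = order.pop(0)  # highest remaining score
        keep.append(best)
        # Discard every remaining box that overlaps the kept box above the threshold
        order = [i for i in order if iou(boxes[best], boxes[i]) <= iou_threshold]
    return keep


boxes = [(0, 0, 10, 10), (1, 1, 11, 11), (20, 20, 30, 30)]
scores = [0.9, 0.75, 0.6]
print(non_max_suppression(boxes, scores))  # [0, 2]
```

The second box overlaps the first with an IoU of about 0.68, so it is treated as a duplicate and suppressed, while the distant third box survives as a separate detection.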

Real-World Applications

Autonomous Vehicles: Self-driving developers such as Tesla and Waymo depend on fast camera-based object detectors, and YOLO variants are a popular choice in the field for identifying traffic signs, cars, and pedestrians within milliseconds. That speed is essential for safety-critical decisions on the road, and the resulting camera detections can be fused with lidar data in real time for a fuller picture of the scene.

Retail Analytics: In many large stores today, YOLO-based systems quietly count inventory, track how shoppers move through aisles, and generate the heat maps marketing teams obsess over. Store managers particularly value how these systems flag potential theft without constant human monitoring, something a conventional camera feed cannot do on its own.

Wildlife Conservation: Ecologists have embraced YOLO for monitoring endangered species across vast wilderness areas using drone footage and trail cameras. When properly trained, the algorithm keeps false positives low, which has revolutionized population counts that once required thousands of human hours to complete.

Manufacturing Quality Control: Factory production lines now incorporate these detectors to spot defects in everything from microchips to automobile parts at speeds unattainable by human inspectors. The system’s ability to run on specialized edge computing devices allows for distributed inspection without costly central processing.

Conclusion

As transformer architectures and self-supervised learning continue to converge with YOLO's efficient design philosophy, we're nearing a turning point where machines will do more than merely detect things: they will learn the relationships, context, and intentions within visual scenes.

The computational barriers are falling rapidly, and with newer, smaller YOLO variants running on everything from smartphones to satellites, we’re witnessing the democratization of computer vision capabilities that seemed like science fiction just a decade ago. The algorithm that once amazed us by simply identifying cats might soon understand exactly what that cat intends to do next.

For additional information on topics pertaining to the most recent technological advancements, visit HitechNectar.