OpenAI has made another move in the AI race with the introduction of GPT-5.4 mini and nano. The company calls them its ‘most capable small models’ to date, designed to handle high-volume workloads faster and more efficiently. Whether the task is coding, reasoning, multimodal understanding, or tool use, the newly launched models are built to handle it at 2x speed.

GPT-5.4 mini and nano have demonstrated strong performance across multiple benchmarks, approaching the scores of the larger GPT-5.4 model. The models suit product experiences where latency matters, such as coding assistants that need to feel responsive and subagents that quickly execute supporting tasks.

Additionally, the models can substantially speed up tools such as computer-using systems that capture and interpret screenshots, and multimodal apps that reason over images in real time.

So, let us dive deeper and analyze the latest models alongside learning about their availability and pricing. Here we go…

What’s New in OpenAI’s GPT-5.4 mini and nano?

Optimized for fast, efficient coding and subagents, GPT-5.4 mini and nano focus chiefly on coding, subagents, and computer use, all at 2x speed. They are most useful in coding workflows that involve executing repetitive steps quickly.

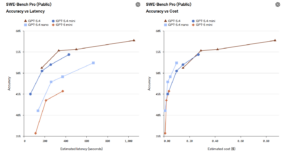

In coding, the new models can handle targeted edits, codebase navigation, front-end generation, and debugging loops. GPT-5.4 mini and nano let developers complete these tasks faster and at lower cost. On the trade-off between performance and latency in coding workflows, GPT-5.4 mini outperforms GPT-5 mini.

Here is a comparison of the accuracy, latency, and cost of GPT-5.4, GPT-5.4 mini, GPT-5.4 nano, and GPT-5 mini on the SWE-Bench Pro benchmark.

Source: OpenAI

The newly launched models, especially GPT-5.4 mini, are well suited to systems that combine models of different sizes. For example, in Codex, GPT-5.4 can handle planning, coordination, and final judgment, while GPT-5.4 mini manages smaller subtasks in parallel, such as searching a codebase, reviewing a large file, or assessing supporting documents. Such an approach lets developers use smaller models for faster and more efficient outcomes.
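The division of labor described above can be sketched as a simple fan-out. This is a minimal illustration, not how Codex is implemented: `call_model` is a stand-in stub rather than a real client, and the model identifiers are assumed names used only for this example.

```python
from concurrent.futures import ThreadPoolExecutor

# Hypothetical model identifiers; check your provider's model list for real names.
PLANNER_MODEL = "gpt-5.4"
SUBAGENT_MODEL = "gpt-5.4-mini"


def call_model(model: str, prompt: str) -> str:
    """Stand-in for a real API call; swap in your own client here."""
    return f"[{model}] {prompt}"


def run_task(goal: str, subtasks: list[str]) -> str:
    # Fan the narrow subtasks out to the small model in parallel.
    with ThreadPoolExecutor(max_workers=4) as pool:
        findings = list(pool.map(lambda s: call_model(SUBAGENT_MODEL, s), subtasks))
    # The large model sees every finding and makes the final call.
    summary = "\n".join(findings)
    return call_model(PLANNER_MODEL, f"Goal: {goal}\nFindings:\n{summary}")
```

Because `pool.map` returns results in the order the subtasks were submitted, the planner receives the findings in a predictable order even though the subagents run concurrently.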

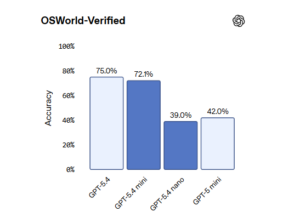

Apart from that, GPT-5.4 mini is highly efficient for multimodal tasks that involve computer use. It can quickly analyze screenshots of information-dense interfaces to complete computer-use tasks. On the OSWorld-Verified benchmark, GPT-5.4 mini comes close to GPT-5.4 and surpasses GPT-5 mini.

Here is how GPT-5.4 mini and nano performed across leading coding, tool-calling, intelligence, vision, and long-context benchmarks:

Source: OpenAI

Understanding the Features of GPT-5.4 mini:

GPT-5.4 mini brings the capabilities of GPT-5.4 into a smaller, more efficient model, helping developers with tasks that require responsiveness. This makes GPT-5.4 mini highly effective for coding, reasoning, multimodal understanding, and tool use, while operating at twice the speed.

Here are the key features to look for:

Text and image inputs: GPT-5.4 mini supports multimodal experiences by combining text prompts with images and screenshots.

Tool use and function calling: For agentic workflows, the model orchestrates tools and APIs.

Web and file search: The model connects AI agents with external and internal data sources for higher accuracy across complex tasks.

Computer use: GPT-5.4 mini supports software-interaction loops in which it analyzes the UI state and then takes accurate, controlled actions.

Apart from these capabilities, GPT-5.4 mini can support several other areas, including:

Developer copilots and coding assistants: GPT-5.4 mini suits latency-sensitive coding work, which is crucial for code review suggestions and faster integration cycles. It speeds up coding assistants and developer copilots.

Multimodal developer workflows: The new model can be highly beneficial for tools that process screenshots, understand UI state, and interpret images during coding and debugging.

Computer use subagents: The model can also serve as a fast executor subagent that takes precise, controlled actions in applications while a planner model coordinates the larger agent loop.

Exploring the Features of GPT‑5.4 nano:

GPT-5.4 nano is designed specifically for low-latency, low-cost API workloads, which makes it a good fit for fast-turnaround tasks such as classification, ranking, and extraction. It is smaller and faster than GPT-5.4 mini and is capable of offering high throughput.

Improved instruction following: GPT-5.4 nano follows instructions consistently and picks up the developer’s intent from well-defined prompts.

Function and tool calling: The model reliably selects and invokes tools and APIs for lightweight automation and agents.

Coding support: The new model supports general coding tasks that require faster turnarounds.

Image understanding: GPT-5.4 nano can perform basic image interpretation alongside text.

Low cost and low latency: The new model is capable of generating quick and efficient responses at scale.

GPT-5.4 nano can be particularly useful for the following tasks:

Classification and intent detection: The model can identify intent and classify requests through fast labeling at high volume.

Extraction and normalization: GPT-5.4 nano can extract text, verify it, and normalize it into standardized responses.

Ranking and prioritization: The model can be used to reorder items in a list, prioritize urgent tasks, and do so with limited latency.

High-volume text processing: The model enables batch text transformation, including batch cleanup, deduping, and normalization.
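As a rough illustration of the classification use case, the sketch below wraps any text-completion function in a prompt builder and a label validator. The label set, the prompt wording, and the `complete` callable are all assumptions made for this example; in practice `complete` would be whatever function sends a prompt to a small model and returns its reply.

```python
from typing import Callable, Iterable

# Illustrative label set; replace with the categories your product needs.
LABELS = ("billing", "technical", "sales", "other")


def build_prompt(text: str) -> str:
    options = ", ".join(LABELS)
    return (
        f"Classify the message into one of: {options}.\n"
        "Reply with the label only.\n"
        f"Message: {text}"
    )


def classify(text: str, complete: Callable[[str], str]) -> str:
    # Normalize the reply and fall back to "other" on anything unexpected.
    raw = complete(build_prompt(text)).strip().lower()
    return raw if raw in LABELS else "other"


def classify_batch(texts: Iterable[str], complete: Callable[[str], str]) -> list[str]:
    return [classify(t, complete) for t in texts]
```

Validating the reply against a fixed label set keeps downstream code safe when the model returns extra words or an unknown category.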

When Should You Choose Which Model?

GPT-5.4 mini suits workloads across real-time agents, developer tools, and retrieval-augmented applications. It is a good fit for interactive systems that need balanced reasoning and low latency.

GPT-5.4 nano can handle real-time interaction, high-volume request routing, and simple automation. It is a good fit for workloads that require ultra-low latency.
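One simple way to encode this guidance is a lookup that maps a workload type to a model. The sketch below is only an illustration: the category names are invented labels for the workloads named in this article, the model identifiers are assumed, and falling back to the larger model for everything else is a design choice rather than an official recommendation.

```python
# Workload categories drawn from this article; names and model IDs are hypothetical.
NANO_TASKS = {"classification", "ranking", "extraction", "request_routing", "simple_automation"}
MINI_TASKS = {"real_time_agent", "developer_tool", "rag"}


def pick_model(task_type: str) -> str:
    if task_type in NANO_TASKS:
        return "gpt-5.4-nano"
    if task_type in MINI_TASKS:
        return "gpt-5.4-mini"
    # Anything unrecognized goes to the larger model.
    return "gpt-5.4"
```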

Availability and Pricing of GPT-5.4 mini and nano

GPT‑5.4 mini

GPT-5.4 mini has been available in the API, Codex, and ChatGPT since its launch on 17 March 2026. In the API, the model supports text and image inputs, tool use, function calling, file search, web search, computer use, and skills. It has a 400k context window and costs $0.75 per 1 million input tokens and $4.50 per 1 million output tokens.

In Codex, GPT-5.4 mini is available in the app, the CLI, the IDE extension, and on the web. Developers can run simpler coding tasks and less reasoning-intensive work at around one-third the cost of GPT-5.4.

In ChatGPT, both Free and Go users can use GPT-5.4 mini through the ‘Thinking’ feature in the + bar. Usage is rate-limited for all ChatGPT users.

GPT-5.4 nano

GPT-5.4 nano is available in the API only as of now, at $0.20 per 1 million input tokens and $1.25 per 1 million output tokens.
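To see what these rates mean for a given workload, the arithmetic can be wrapped in a small helper. The prices are the per-million-token figures quoted in this article; the dictionary keys are just labels chosen for this sketch, not confirmed API model names.

```python
# USD per 1 million tokens, as quoted in this article.
# The keys are illustrative labels, not confirmed API model names.
PRICES = {
    "gpt-5.4-mini": {"input": 0.75, "output": 4.50},
    "gpt-5.4-nano": {"input": 0.20, "output": 1.25},
}


def estimate_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Return the estimated cost in USD for one workload."""
    price = PRICES[model]
    total = input_tokens * price["input"] + output_tokens * price["output"]
    return total / 1_000_000
```

For example, a workload of 10 million input tokens and 2 million output tokens comes to roughly $16.50 on GPT-5.4 mini and $4.50 on GPT-5.4 nano at these rates.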

Concluding Remarks!

GPT-5.4 mini and nano are set to advance your coding, subagent, and computer use workloads with exceptional speed and higher efficiency. Leaders across the industry have applauded the performance of these small models, including Hebbia, CodeRabbit, Mercor, and GitHub.

However, these models are best suited to smaller workloads and subtasks. Bigger coding and multimodal tasks will still require larger models, such as GPT-5.4. You can now use GPT-5.4 mini in the API, Codex, and ChatGPT, while GPT-5.4 nano is available only in the API.

FAQs:

Q1. Is ChatGPT 5 free?

Answer: Yes, GPT-5 is available to free ChatGPT users.

Q2. Is GPT-5 mini API free?

Answer: No, GPT-5 mini is not free. It is available at $0.25 per million input tokens and $2 per million output tokens.

Q3. What’s better, GPT-4 or 5?

Answer: GPT-5 is the successor to GPT-4. It generates more authentic, adaptable, and human-like outputs than GPT-4, and does so faster.
